22/04/2024

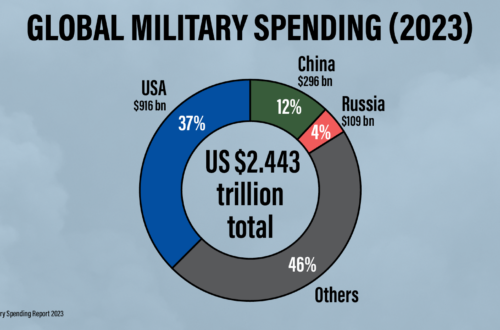

The world spent $2.44 trillion on the military in 2023, according to new data published today by SIPRI.

Today (Monday, April 22) is the most important day of this year's Global Days of Action on Military Spending (GDAMS), with several events taking place around the world. SIPRI has just published new data on military spending for 2023, and the figures show very strong growth in military spending,...

22/04/2024

UNODA’s Statement on the Global Days of Action on Military Spending

Izumi Nakamitsu, High Representative for Disarmament Affairs, issued the following message on the occasion of the 2024 Global Days of...

12/04/2024

GDAMS 2024 Statement · War Costs Us The Earth

Disarmament now to save people and planet. Humanity is at a crossroads where political decisions on defence budgets will determine...

12/04/2024

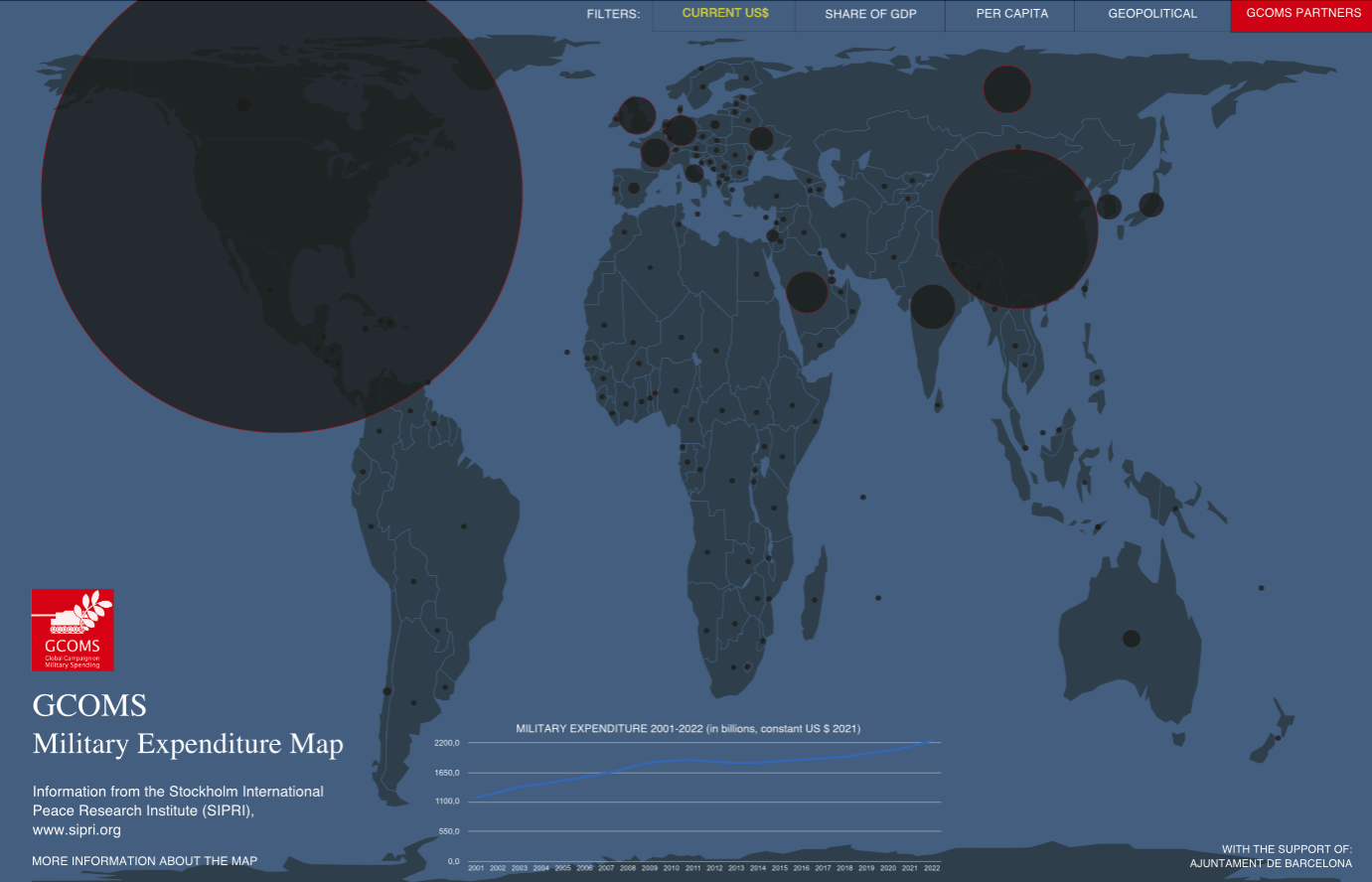

Check out our interactive military spending map!

Discover the new GCOMS campaign tool. Create your own infographics. Find out about your country's military spending. Compare military spending...

21/03/2024

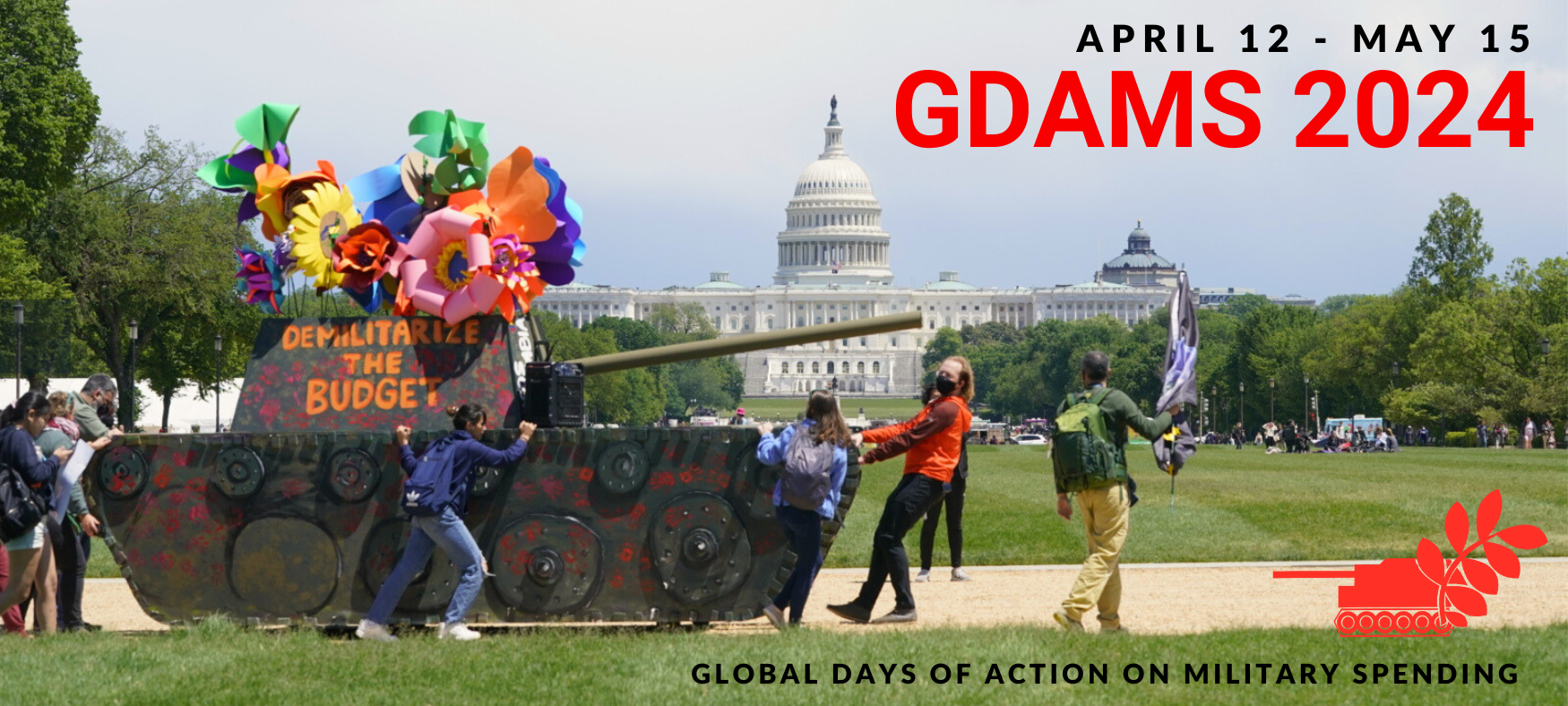

Save the dates for GDAMS 2024: April 12 to May 15

We are witnessing the dramatic consequences of escalating global militarization, evident in the numerous armed conflicts around the world, notably...